January 9 ended up being a very expensive day for a Culver City, California man after he pleaded guilty to recklessly operating a drone during the height of the Pacific Palisades wildfire. We covered this story a bit when it happened (second item); the flight ended with the drone striking and damaging the leading edge of a Canadian “Super Scooper” plane that was trying to fight the fire. Peter Tripp Akemann, 56, admitted to taking the opportunity to go to the top of a parking garage in Santa Monica and launching his drone to get a better view of the action to the northwest. Unfortunately, the drone got about 2,500 meters away, far beyond visual range and, as it turns out, directly in the path of the planes refilling their tanks by skimming along the waters off Malibu. The agreement between Akemann and federal prosecutors calls for a guilty plea along with full restitution to the government of Quebec, which owns the damaged plane, plus the costs of repair. Akemann needs to write a check for $65,169 plus perform 150 hours of community service related to the relief effort for the fire’s victims. Expensive, yes, but probably better than the year in federal prison such an offense could have earned him.

Another story we’ve been following for a while is the United States government’s effort to mandate that every car sold here comes equipped with an AM radio. The argument is that broadcasters, at the government’s behest, have devoted a massive amount of time and money to bulletproofing AM radio, up to and including providing apocalypse-proof bunkers for selected stations, making AM radio a vital part of the emergency communications infrastructure. Car manufacturers, however, have been routinely deleting AM receivers from their infotainment products, arguing that nobody but boomers listens to AM radio in the car anymore. This resulted in the “AM Radio for Every Vehicle Act,” which enjoyed some support the first time it was introduced but still failed to pass. The bill has been reintroduced and appears to be on a fast track to approval, both in the Senate and the House, where a companion bill was introduced this week. As for the “AM is dead” argument, the Geerling boys put the lie to that by noting that the Arbitron ratings for AM stations around Los Angeles spiked dramatically during the recent wildfires. AM might not be the first choice for entertainment anymore, but when things start getting real, people know where to go.

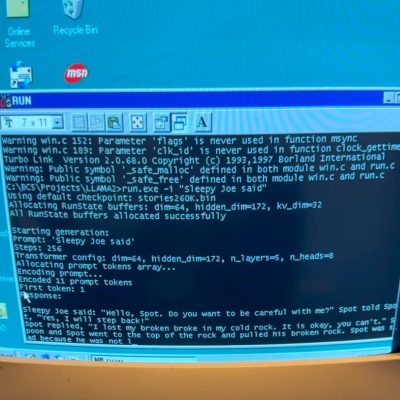

Most of us are probably familiar with the concept of a honeypot, which is a system set up to entice black hat hackers with the promise of juicy information but instead traps them. It’s a time-honored security tactic, but one that relies on human traits like greed and laziness to work. Protecting yourself against non-human attacks, like those coming from bots trying to train large language models on your content, is a different story. That’s where you might want to look at something like Nepenthes, a tarpit service intended to slow down and confuse the hell out of LLM bots. Named after a genus of carnivorous pitcher plants, Nepenthes traps bots with a two-pronged attack. First, the service generates a randomized but deterministic wall of text that almost but not quite reads like sensible English. It also populates a bunch of links for the bots to follow, all of which point right back to the same service, generating another page of nonsense text and self-referential links. Ingeniously devious; use with caution, of course.
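To make the “randomized but deterministic” idea concrete, here is a minimal Python sketch of the general tarpit technique. It is not Nepenthes’ actual code; the word list, the `render_page` function, and the link format are all made up for illustration. The trick it shows is seeding the random generator from the requested path, so a given URL always returns the same nonsense while every link leads to a different page of it.

```python
import hashlib
import random

# Hypothetical vocabulary; a real tarpit would draw on something far larger.
WORDS = [
    "pitcher", "plant", "signal", "river", "quietly", "follows", "under",
    "bright", "machine", "never", "garden", "between", "copper", "answers",
    "slowly", "window",
]


def render_page(path: str, n_words: int = 60, n_links: int = 5) -> str:
    """Return a page of filler text and links, fully determined by `path`."""
    # Hash the path into a seed so the same URL always produces the same page.
    digest = hashlib.sha256(path.encode("utf-8")).digest()
    rng = random.Random(int.from_bytes(digest[:8], "big"))

    # Word salad that looks vaguely like prose to a crawler.
    text = " ".join(rng.choice(WORDS) for _ in range(n_words))

    # Every link points back into the same service, just at a new path.
    links = [f"/{rng.getrandbits(32):08x}" for _ in range(n_links)]
    anchors = "\n".join(f'<a href="{href}">{href}</a>' for href in links)

    return f"<html><body><p>{text}</p>\n{anchors}\n</body></html>"


if __name__ == "__main__":
    print(render_page("/start"))
```

Hooking something like this up to a web server with a deliberate delay on each response would presumably give the “slow down” half of the trap; the sketch covers only the page generation.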

When was the last time you actually read a Terms of Service document? If you’re like most of us, the closest you’ve ever come is the few occasions where you’ve got to scroll to the bottom of a text window before the “Accept Terms” button is enabled. We all know it’s not good to agree to something legally binding without reading it, but who has time to trawl through all that legalese? Nobody we know, which is where ToS;DR comes in. “Terms of Service; Didn’t Read” does the heavy lifting of reading ToS documents and EULAs for you, providing a summary of what you’re agreeing to as well as an overall grade from A to E, with E being the lowest. Refreshingly, the summaries and ratings are not performed by some LLM but rather by volunteer reviewers, who pore over the details so you don’t have to. Talk about taking one for the team.

And finally, how many continents do you think there are? Most of us were taught that there are seven, which would probably come as a surprise to an impartial extraterrestrial, who would probably say there’s a huge continent in one hemisphere, a smaller one with a really skinny section in the other hemisphere, the snowy one at the bottom, and a bunch of big islands. That’s not how geologists see things, though, and new research into plate tectonics suggests that the real number might be six continents. So which continent is getting the Pluto treatment? Geologists previously believed that the European plate fully separated from the North American plate 52 million years ago, but recent undersea observations in the arc connecting Greenland, Iceland, and the Faroe Islands suggest that the plate is still pulling apart. That would make Europe and North America one massive continent, at least tectonically. This is far from a done deal, of course; more measurements will reveal if the crust under the ocean is still stretching out, which would support the hypothesis. In the meantime, Europe, enjoy your continental status while you still can.